Our firm takes data-driven insights and models and brings them to the field as applications. There may be dozens or hundreds of users spread over multiple locations and working in different shifts or in different jobs, with different things to solve. Over the years we have overcome many challenges to deploying optimization and we have systematized the problems, dilemmas and mitigation techniques.

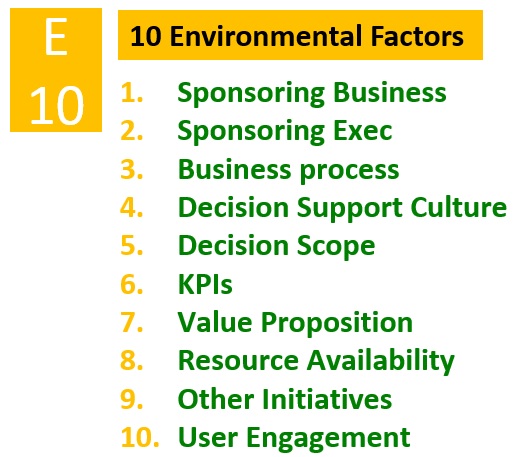

We employ a framework of 20 scorable risk factors, the “Princeton 20,” to manage our optimization projects for deployment into production. There are 10 environmental and 10 technical factors. In this post, I will address the environmental, or business, risks. In a later post, I will address the technical risks.

1. Sponsoring business. If the company is successful and growing, that is a negative risk, meaning it lowers the project’s risk. When things are going well, the wind is at our back because the company’s leaders are optimistic; they’re not trying to downsize, and there is more money to be spent. Our standard case, however, is that the company is consolidating and underperforming: something has gone wrong. We have some history with start-ups that aim to be the next disruptor. Their projects are often the most difficult because there are no customers or revenue.

To manage this risk, we perform careful project scope selection and management; create a matrix of centralized expertise vs. embedded team resources; focus on a track record of tangible ROI benefits.

2. Sponsoring executive. To a consultant, “sponsoring executive” is very crisp: Who is signing our check? Whom do we send our proposal to? It is low risk when the sponsoring executive runs the business into which we’re deploying our model. For us, the sponsor is often a senior manager, not necessarily the top executive. Occasionally, the sponsor is not an executive in the line of business at all, but one who leads the analytics or IT organization. This brings risk because such an executive may see the problem very rationally, and differently from the way the business executive sees it. Also, the end users of the application typically report up through their general manager or their senior manager and not through analytics or IT.

Select mitigators: steering committee formation and regular reporting; link to stated corporate vision and other transformation advocates; explicit project tasks around broadcasting/socializing progress.

3. Business process. What business process is this model going into? It is low risk if it is well established. We typically see that the process will remain consistent, but the model will change the game. If the model will be part of a brand-new business process, that entails high risk.

Select mitigators: experienced change management resources as part of the core team; RACI charting and OKRs (Objectives and Key Results); upfront analysis of whether the model supports user performance metrics.

4. Decision support culture. Does the organization use other models for decisions that are adjacent, upstream or downstream? If so, that lends credibility. For example, if the model is helping decide whether to make a person a job offer, an upstream model would be one that decides, based on the resume, whether to call in the applicant. Sometimes we see a project at a company that doesn’t use models for this type of decision-making, which represents high risk.

Select mitigators: high attention to human-in-the-loop best practices; build real-time data validation/cleaning into the model UX; reward user champions and storytelling around innovators in the same and other industries.

5. Decision scope. The more defined the decision scope, the easier the project; the fuzzier the scope, the riskier the project. High risk doesn’t equal “bad.” A fuzzier, bigger problem can offer a good risk-return trade-off.

Select mitigators: agile analytics deployment; start with self-acknowledged pain areas/scenarios first—but have a mid/long-term roadmap.

6. KPIs. Ideally, client executives have examples from when they were working with data and the KPIs measured the model’s solution. When scheduling operations, we like to replay prior scenarios of jobs, people, the schedules built, and how waste, utilization over time, and so on were costed. We run a new optimization over them to see whether we could have done better based on the KPIs. A lot of KPIs are not measured very well; this creates risk. With scheduling, we may be trying to reduce overtime or ensure good customer service, but the client organization may not have a culture of defining the actual numbers they are trying to solve for with those KPIs. When we run an optimization, we often find extra utilization available and we may use that as a proxy for where the money is. At our firm, typically the clients have some scenarios, but the data is censored: for example, only accepted, scheduled and performed actions are shown, not actions that were refused.

Select mitigators: thoughtful workshopping to determine/validate the right KPIs; agreed-upon quantitative experimental design; simulation, machine learning insights, benchmarking, SME theories/insights.

7. Value proposition. In other words, “why are we doing this?” Sometimes clients are hell-bent on putting in a model although the value proposition is not clear. Often, we see the proposition that the model will make the business more efficient (executives will be able to spend less time on the small stuff and have more time to concentrate), but the benefit is not quantified very well.

Select mitigators: perform a formal CBA (cost/benefit analysis); “contract” with the user to measure and support the model’s benefit; tie to strategic goals currently underway.

8. Resource availability. In short, the people. Are the business, IT, Analytics and other people you have to work with available for the project?

Select mitigators: socializing and broadcasting the CBA; pay high attention to the sponsoring executive and steering committee; build contingent resourcing in the project plan from the start.

9. Other initiatives. Ideally, our project is standalone or can draw on someone dedicated to running it successfully. The worst case is that, with other initiatives overlapping, the key people to talk to are too busy. Even if the boss orders them to participate, their organization protects them. In many organizations, there is a handful of people who know everything; they are the subject matter experts who really count.

Select mitigators: make the most skilled project managers core to the team; reduce/remove inter-initiative dependencies where feasible; fully transparent weekly status reporting.

10. User engagement. It is low risk when the model’s users are employees who actually work for the executive sponsor. More likely, though, they are not directly under the sponsor’s control. They might be customers, for instance. In the high-risk case, we are hopeful that the new optimization model will make users want to use our products. Think of an app that does a better job of recommending hotels. The rationale is that because it has been doing an amazing job, it will draw people in.

Select mitigators: high focus on the model UX that mirrors optimization best practices; iterative agile deployment across multiple representative user groups.

At Princeton Consultants, we score a project, before it starts, on these criteria. This scoring is relative to our firm’s experience: 0 does not mean the factor carries neutral risk; it means the factor looks like what we normally see. +1 means the factor is a little riskier than usual, a problem we have seen before. -1 means the factor is less risky than what we normally see. For each +1, our project team comes up with a mitigation strategy.
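The scoring described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not our actual tooling: the function names, the example scores, and the idea of summing the scores into a single net figure are all hypothetical; only the ten factor names and the -1/0/+1 scale come from this post.

```python
# Illustrative sketch only: function names and example scores are hypothetical.
ENVIRONMENTAL_FACTORS = [
    "Sponsoring business",
    "Sponsoring executive",
    "Business process",
    "Decision support culture",
    "Decision scope",
    "KPIs",
    "Value proposition",
    "Resource availability",
    "Other initiatives",
    "User engagement",
]

VALID_SCORES = {-1, 0, 1}  # -1: less risky than usual, 0: typical, +1: riskier


def validate(scores: dict[str, int]) -> None:
    """Check that every factor is scored exactly once with -1, 0 or +1."""
    if set(scores) != set(ENVIRONMENTAL_FACTORS):
        raise ValueError("scores must cover exactly the ten environmental factors")
    for name, value in scores.items():
        if value not in VALID_SCORES:
            raise ValueError(f"{name}: score must be -1, 0 or +1, got {value}")


def factors_needing_mitigation(scores: dict[str, int]) -> list[str]:
    """Return the factors scored +1, in the order they appear in the framework."""
    validate(scores)
    return [name for name in ENVIRONMENTAL_FACTORS if scores[name] == 1]


def net_score(scores: dict[str, int]) -> int:
    """Sum of all scores (an assumed summary figure, not part of the framework)."""
    validate(scores)
    return sum(scores.values())


# Hypothetical project: a growing sponsor, poorly measured KPIs, overlapping initiatives.
example_scores = {name: 0 for name in ENVIRONMENTAL_FACTORS}
example_scores["Sponsoring business"] = -1
example_scores["KPIs"] = 1
example_scores["Other initiatives"] = 1

to_mitigate = factors_needing_mitigation(example_scores)
total = net_score(example_scores)
```

In this hypothetical case, the team would write mitigation strategies for the two factors in `to_mitigate`, drawing on the select mitigators listed under each factor.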

Admittedly, these risk factors are very situational, based on our firm’s view of the marketplace. I believe that simply getting a group to share a framework is far more valuable than the precise content of the framework itself. Princeton Consultants offers an assessment of an optimization project’s risk factors and recommendations to mitigate them. Contact us to learn more.

Next: 10 Technical Factors to Monitor